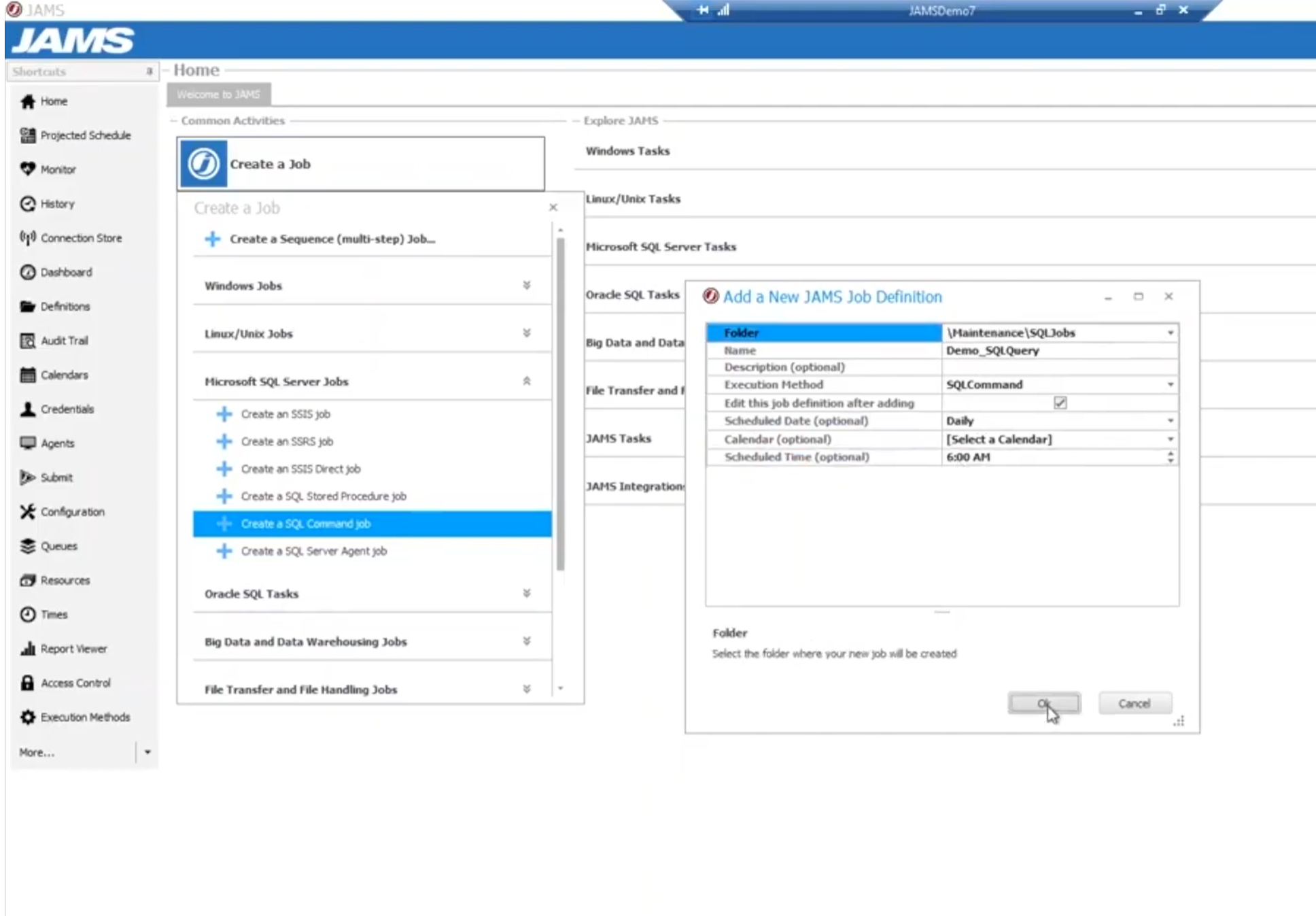

Daily Data Warehouse Refresh

Coordinate extraction from multiple source systems, validate data quality in staging, run Informatica transformations, load dimension and fact tables, update materialized views, and refresh BI cubes, with automatic recovery when individual steps fail.
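
The ordering and recovery behavior described above can be illustrated with a minimal sketch. It assumes that "automatic recovery" means retrying a failed step a bounded number of times before aborting the refresh; the source does not say which mechanism is actually used. The names `run_pipeline`, `max_attempts`, and the step functions are hypothetical placeholders, not part of Informatica or any scheduler API.

```python
from typing import Callable, Dict, List, Tuple

# A step is a (name, action) pair; the action raises an exception on failure.
Step = Tuple[str, Callable[[], None]]


def run_pipeline(steps: List[Step], max_attempts: int = 3) -> List[str]:
    """Run steps in order, retrying each failed step up to max_attempts times.

    Assumption: recovery is modeled as a plain retry. A real refresh might
    instead resume from a checkpoint or roll back a partial load.
    """
    log: List[str] = []
    for name, action in steps:
        for attempt in range(1, max_attempts + 1):
            try:
                action()
                log.append(f"{name}: ok (attempt {attempt})")
                break  # step succeeded, move on to the next one
            except Exception as exc:
                log.append(f"{name}: failed (attempt {attempt}): {exc}")
        else:
            # Every attempt failed: stop so later steps never see bad data.
            raise RuntimeError(f"step {name!r} failed after {max_attempts} attempts")
    return log


# Hypothetical stand-ins for the real work done at each stage.
calls: Dict[str, int] = {"transform": 0}


def noop() -> None:
    pass


def flaky_transform() -> None:
    # Fails on the first call only, simulating a transient error.
    calls["transform"] += 1
    if calls["transform"] == 1:
        raise ConnectionError("transient failure")


steps: List[Step] = [
    ("extract", noop),
    ("validate", noop),
    ("transform", flaky_transform),
    ("load", noop),
    ("refresh_views", noop),
    ("refresh_cubes", noop),
]

log = run_pipeline(steps)
```

In this sketch the `transform` step fails once and succeeds on its second attempt, so the downstream load and refresh steps still run. Raising after the final attempt is a deliberate choice: it keeps dimension and fact loads from running against data that was never transformed.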